Secure AI Agent Execution: Sandboxes, Guardrails, and the Future of Trustless Code

AI Security & Development

AI agents are no longer just text generators. They can now write, execute, and validate code as part of their workflows. That unlocks powerful use cases in data analysis, automation, and decision-making. But it also brings the riskiest capability we can hand to an AI system: the ability to run arbitrary code.

Code execution is a double-edged sword. Managed well, it accelerates productivity and enables enterprise-scale solutions. Managed poorly, it opens the door to privilege escalation, data leaks, and system compromise. That's why secure execution environments are now at the center of attention for cloud providers, open-source projects, and enterprise teams.

Why Guardrails Are Essential

Letting an AI agent run code means giving it direct influence over systems and data. Without safeguards, the attack surface is wide:

Injection risks: Generated code can include SQL or command injection payloads.

Privilege escalation: Misconfigured roles grant access far beyond what’s intended.

Data leakage: Weak isolation exposes sensitive information.

System compromise: Buggy or malicious code can exhaust resources or tamper with the runtime environment.
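To make the first risk concrete, here is a minimal sketch using Python's built-in `sqlite3` module. The `users` table, its rows, and the `lookup_unsafe`/`lookup_safe` helpers are hypothetical. If agent-generated code builds SQL by string interpolation, a crafted value can rewrite the query. A parameterized query treats the same value as plain data.

```python
import sqlite3

def setup() -> sqlite3.Connection:
    # In-memory database with a hypothetical users table
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, api_key TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "key-a"), ("bob", "key-b")],
    )
    return conn

def lookup_unsafe(conn: sqlite3.Connection, name: str) -> list:
    # Vulnerable: the input is spliced directly into the SQL text
    return conn.execute(f"SELECT api_key FROM users WHERE name = '{name}'").fetchall()

def lookup_safe(conn: sqlite3.Connection, name: str) -> list:
    # Safe: the driver binds the input as a value, never as SQL
    return conn.execute("SELECT api_key FROM users WHERE name = ?", (name,)).fetchall()

if __name__ == "__main__":
    conn = setup()
    payload = "x' OR '1'='1"
    print(lookup_unsafe(conn, payload))  # the OR clause matches every row
    print(lookup_safe(conn, payload))    # no user has that literal name
```

The same principle applies to shell commands. Pass arguments as a list instead of building a command string from model output.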

We’ve already seen this play out. Early “code interpreter” tools allowed arbitrary shell commands without isolation. A simple prompt injection could exfiltrate environment variables and API keys. The lesson is clear: without strong guardrails, enterprise adoption is unsafe.
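One mitigation for that exfiltration pattern is to never let generated code inherit the host's environment. The following is a minimal sketch. It assumes the code runs in a child Python process on a POSIX-like host, and the `SAFE_ENV_KEYS` allowlist and `run_untrusted` helper are illustrative. It only keeps secrets out of the child's environment; on its own it is not isolation.

```python
import os
import subprocess
import sys

# Illustrative allowlist (an assumption): only these variables reach the child
SAFE_ENV_KEYS = {"PATH", "LANG", "TZ"}

def scrubbed_env() -> dict[str, str]:
    """Copy only allowlisted variables; API keys and tokens are dropped."""
    return {k: v for k, v in os.environ.items() if k in SAFE_ENV_KEYS}

def run_untrusted(code: str, timeout_s: float = 10.0) -> str:
    # -I runs Python in isolated mode (ignores PYTHON* env vars and user site-packages)
    result = subprocess.run(
        [sys.executable, "-I", "-c", code],
        env=scrubbed_env(),
        capture_output=True,
        text=True,
        timeout=timeout_s,
    )
    return result.stdout

if __name__ == "__main__":
    os.environ["DEMO_API_KEY"] = "sk-demo-secret"  # fake secret for the demo
    leaked = run_untrusted("import os; print(os.environ.get('DEMO_API_KEY'))")
    print(leaked.strip())  # the child never sees the secret
```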

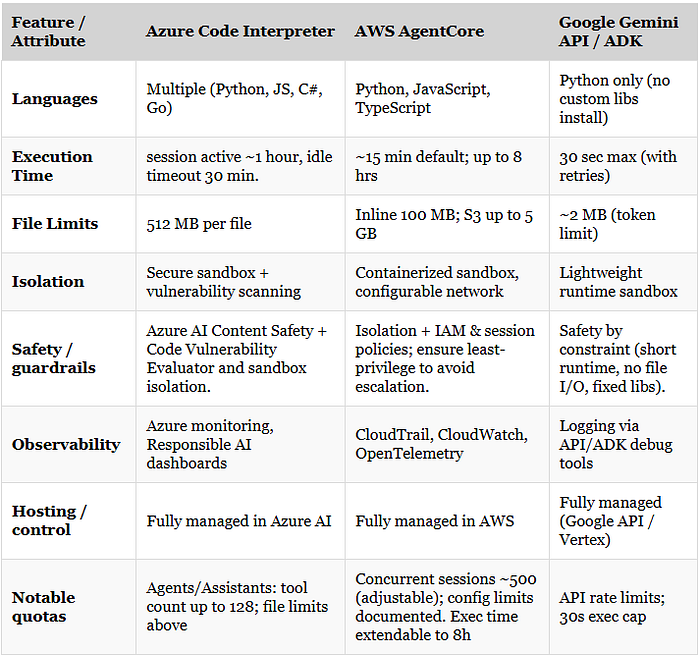

How the Cloud Providers Handle It

Azure Code Interpreter

Azure emphasizes proactive safety:

Content filtering for harmful input

Code vulnerability scanning

Isolated sandbox execution

Best for regulated industries or teams that want built-in safeguards.
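Azure's scanner internals aren't described here. The following is a toy illustration of pre-execution code scanning, not Azure's implementation. It parses generated Python with the standard `ast` module and flags obviously dangerous imports and calls before anything runs. The deny-lists and the `scan` helper are hypothetical and easy to bypass (for example via `getattr` tricks). Treat this kind of check as a first filter in front of a sandbox, never as a replacement for one.

```python
import ast

# Hypothetical deny-lists; real scanners use far richer rules
BLOCKED_MODULES = {"os", "subprocess", "socket", "shutil", "ctypes"}
BLOCKED_CALLS = {"eval", "exec", "compile", "__import__", "open"}

def scan(code: str) -> list[str]:
    """Return findings for generated code; an empty list means nothing was flagged."""
    try:
        tree = ast.parse(code)
    except SyntaxError as exc:
        return [f"syntax error: {exc.msg}"]
    findings = []
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            for alias in node.names:
                if alias.name.split(".")[0] in BLOCKED_MODULES:
                    findings.append(f"line {node.lineno}: import {alias.name}")
        elif isinstance(node, ast.ImportFrom):
            root = (node.module or "").split(".")[0]
            if root in BLOCKED_MODULES:
                findings.append(f"line {node.lineno}: from {node.module} import ...")
        elif isinstance(node, ast.Call) and isinstance(node.func, ast.Name):
            # Only direct calls by bare name are caught, e.g. eval(...)
            if node.func.id in BLOCKED_CALLS:
                findings.append(f"line {node.lineno}: call {node.func.id}()")
    return findings

if __name__ == "__main__":
    generated = "import subprocess\nsubprocess.run(['curl', 'http://evil.example'])"
    print(scan(generated))  # flags the subprocess import
```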

AWS AgentCore

AWS optimizes for flexibility and scale:

Containerized sandboxes with configurable network access

Extended runtimes (up to 8 hours)

Large data support (files up to 5 GB via S3)

Observability through CloudTrail, CloudWatch, and OpenTelemetry

Powerful, but it puts the responsibility on teams to configure IAM roles and privileges carefully.
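Because that configuration burden falls on your team, it helps to check policies automatically before attaching them to an agent's role. Below is a minimal sketch that assumes IAM-style JSON policy documents, meaning a `Statement` list whose entries carry `Effect`, `Action`, and `Resource`. It flags `Allow` statements that use wildcards. The `lint_policy` helper and the example bucket ARN are hypothetical, and the check ignores `NotAction`, `NotResource`, and `Condition`.

```python
import json

def _as_list(value) -> list:
    # IAM allows Statement, Action, and Resource to be a single item or a list
    return value if isinstance(value, list) else [value]

def lint_policy(policy: dict) -> list[str]:
    """Flag Allow statements whose actions or resources use wildcards."""
    warnings = []
    for i, stmt in enumerate(_as_list(policy.get("Statement", []))):
        if stmt.get("Effect") != "Allow":
            continue  # Deny statements only narrow access
        for action in _as_list(stmt.get("Action", [])):
            if "*" in action:
                warnings.append(f"statement {i}: wildcard action '{action}'")
        for resource in _as_list(stmt.get("Resource", [])):
            if resource == "*":
                warnings.append(f"statement {i}: applies to all resources")
    return warnings

if __name__ == "__main__":
    policy = json.loads("""
    {
      "Version": "2012-10-17",
      "Statement": [
        {"Effect": "Allow", "Action": "s3:*", "Resource": "*"},
        {"Effect": "Allow", "Action": "s3:GetObject",
         "Resource": "arn:aws:s3:::agent-inputs/*"}
      ]
    }
    """)
    for warning in lint_policy(policy):
        print(warning)  # only the first, over-broad statement is flagged
```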

Google Gemini API / ADK

Google opts for safety through constraints:

Python-only execution

Hard 30-second runtime limit

File size capped at ~2 MB

Automatic retries for resilience

Great for lightweight workflows, less so for heavy enterprise workloads.
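The constrain-and-retry pattern is easy to reproduce in a self-hosted harness. The sketch below is not the Gemini API. `generate` stands in for a hypothetical model call, and the constants mirror the limits listed above: 30 seconds per run, plus a ~2 MB cap applied to the generated source as a stand-in for the file-size limit. Error output is fed back to the generator for a bounded number of attempts.

```python
import subprocess
import sys
from dataclasses import dataclass
from typing import Callable, Optional

TIMEOUT_S = 30                      # mirrors the hard runtime limit above
MAX_SOURCE_BYTES = 2 * 1024 * 1024  # stand-in for the ~2 MB cap (an assumption)

@dataclass
class Attempt:
    code: str
    ok: bool
    output: str

def execute(code: str) -> tuple[bool, str]:
    """Run code in a child interpreter; return (success, stdout or error text)."""
    if len(code.encode()) > MAX_SOURCE_BYTES:
        return False, "rejected: source exceeds size cap"
    try:
        proc = subprocess.run([sys.executable, "-I", "-c", code],
                              capture_output=True, text=True, timeout=TIMEOUT_S)
    except subprocess.TimeoutExpired:
        return False, f"timed out after {TIMEOUT_S}s"
    if proc.returncode != 0:
        return False, proc.stderr
    return True, proc.stdout

def run_with_retries(generate: Callable[[Optional[str]], str],
                     max_attempts: int = 3) -> list[Attempt]:
    """Request code, run it, and feed errors back until success or the budget runs out."""
    history: list[Attempt] = []
    feedback: Optional[str] = None
    for _ in range(max_attempts):
        code = generate(feedback)
        ok, output = execute(code)
        history.append(Attempt(code, ok, output))
        if ok:
            break
        feedback = output  # error text becomes context for the next attempt
    return history

if __name__ == "__main__":
    def fake_generate(feedback: Optional[str]) -> str:
        # Hypothetical model: the first draft has a bug, the retry fixes it
        return "print(1/0)" if feedback is None else "print(42)"

    for attempt in run_with_retries(fake_generate):
        print(attempt.ok, attempt.output.strip().splitlines()[-1])
```

Capping attempts matters as much as capping runtime. Otherwise a model that keeps producing failing code can loop indefinitely.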

Comparison

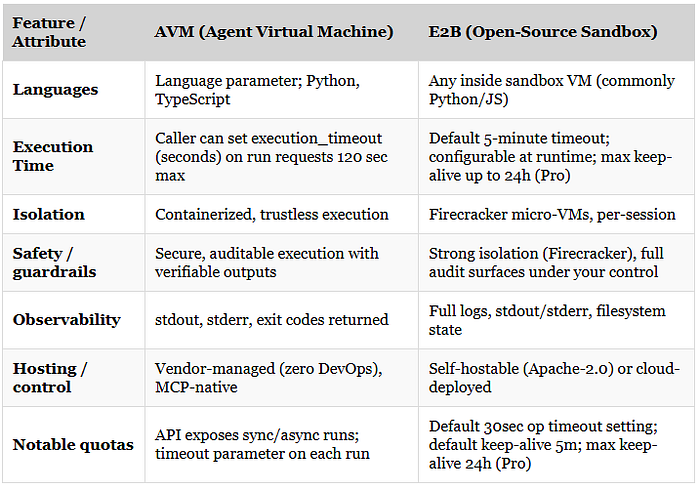

Emerging Alternatives: AVM and E2B

AVM (Agent Virtual Machine)

Built for the “agent economy”:

Trustless, containerized execution

No DevOps overhead (access through a Model Context Protocol, or MCP, API)

Verifiable outputs for compliance pipelines

Think of it as a portable execution layer for multi-agent systems.
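AVM's verification mechanism isn't detailed here. As a generic illustration of what "verifiable outputs" can mean, consider a runner that hashes the code, input, and output of each run and signs the bundle, so that a downstream compliance step can detect any alteration. For brevity, this sketch uses a shared-secret HMAC from the standard library. That is an assumption: truly trustless verification would rely on asymmetric signatures or hardware attestation. The receipt fields and the `make_receipt`/`verify_receipt` helpers are hypothetical.

```python
import hashlib
import hmac
import json

def _sha256(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()

def make_receipt(code: str, stdin: str, stdout: str, key: bytes) -> dict:
    """Bind code, input, and output together and sign the bundle."""
    body = {"code_sha256": _sha256(code),
            "stdin_sha256": _sha256(stdin),
            "stdout_sha256": _sha256(stdout)}
    # Canonical JSON so signer and verifier hash identical bytes
    payload = json.dumps(body, sort_keys=True, separators=(",", ":")).encode()
    body["signature"] = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return body

def verify_receipt(receipt: dict, code: str, stdin: str, stdout: str, key: bytes) -> bool:
    """Recompute the receipt from the claimed artifacts and compare in constant time."""
    expected = make_receipt(code, stdin, stdout, key)
    return hmac.compare_digest(expected["signature"], receipt.get("signature", ""))

if __name__ == "__main__":
    key = b"demo-key-do-not-use"  # hypothetical shared secret
    receipt = make_receipt("print(2+2)", "", "4\n", key)
    print(verify_receipt(receipt, "print(2+2)", "", "4\n", key))  # True
    print(verify_receipt(receipt, "print(2+2)", "", "5\n", key))  # False: output altered
```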

E2B (Open Source Sandbox)

Developer-first and self-hostable:

Firecracker micro-VMs spin up in ~150 ms

Full environment control, including packages and internet access

Complete audit trail of logs and file state

Open-source, Apache-2.0 license

Ideal for teams that value transparency and want to avoid vendor lock-in.
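An audit trail is only useful if tampering is detectable. A common technique for this is a hash chain, shown here as a general pattern rather than a description of E2B's internals. Each log entry stores the hash of the previous one, so editing or deleting any entry breaks every link after it. The sketch below is a minimal in-memory version; the `AuditLog` class and its event fields are hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

@dataclass
class AuditLog:
    entries: list[dict] = field(default_factory=list)

    @staticmethod
    def _digest(prev_hash: str, event: dict) -> str:
        payload = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def append(self, event: dict) -> None:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        self.entries.append({"event": event, "prev": prev,
                             "hash": self._digest(prev, event)})

    def verify(self) -> bool:
        """Walk the chain and confirm every link and every hash."""
        prev = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev or entry["hash"] != self._digest(prev, entry["event"]):
                return False
            prev = entry["hash"]
        return True

if __name__ == "__main__":
    log = AuditLog()
    log.append({"action": "run_code", "cell": 1})
    log.append({"action": "write_file", "path": "/tmp/out.csv"})
    print(log.verify())  # True
    log.entries[1]["event"]["path"] = "/tmp/other.csv"  # simulate tampering
    print(log.verify())  # False
```

A chain alone does not reveal entries cut off the end. To catch truncation, periodically record the latest hash somewhere the sandbox cannot write, such as your monitoring stack.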


Security Best Practices

No matter the platform, these are the non-negotiables:

Apply least privilege to IAM roles and network access

Default to offline sandboxes unless online access is essential

Enforce strict execution limits (timeouts, memory, file caps)

Audit everything and push logs to your monitoring stack

Red-team your agents with malicious prompts and payloads
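The execution-limits item translates directly into code. The following is a minimal POSIX-only sketch built on Python's standard `resource` and `subprocess` modules. The child process gets a wall-clock timeout, a CPU-time cap, an address-space cap, and a maximum file size, and it starts from an empty environment. The limit values and the `run_limited` helper are illustrative assumptions. This covers only the limits item: offline networking and least privilege still need OS- or platform-level controls such as containers, micro-VMs, or firewall rules.

```python
import resource
import subprocess
import sys

# Illustrative limits (assumptions); tune for your workload
CPU_SECONDS = 5
MEMORY_BYTES = 512 * 1024 * 1024   # 512 MiB of address space
MAX_FILE_BYTES = 10 * 1024 * 1024  # largest file the child may write
WALL_CLOCK_SECONDS = 10

def _apply_limits() -> None:
    # Runs in the child just before exec (POSIX only; avoid in multithreaded parents)
    resource.setrlimit(resource.RLIMIT_CPU, (CPU_SECONDS, CPU_SECONDS))
    resource.setrlimit(resource.RLIMIT_AS, (MEMORY_BYTES, MEMORY_BYTES))
    resource.setrlimit(resource.RLIMIT_FSIZE, (MAX_FILE_BYTES, MAX_FILE_BYTES))

def run_limited(code: str) -> subprocess.CompletedProcess:
    """Run untrusted Python with hard resource caps and no inherited secrets."""
    return subprocess.run(
        [sys.executable, "-I", "-c", code],
        preexec_fn=_apply_limits,
        env={},                      # nothing from the host environment leaks in
        capture_output=True,
        text=True,
        timeout=WALL_CLOCK_SECONDS,  # raises subprocess.TimeoutExpired if exceeded
    )

if __name__ == "__main__":
    print(run_limited("print('hello')").stdout.strip())
    hog = run_limited("x = bytearray(2 * 1024**3)")  # tries to allocate 2 GiB
    print(hog.returncode, "MemoryError" in hog.stderr)
```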

What’s Next: Trustless Execution

The trend is clear:

Cloud providers will continue adding responsible AI guardrails

Independent frameworks will drive portability and transparency

Trustless execution, where every run is verifiable, will become the gold standard

For engineers and security leaders, the mindset must shift. Treat agent execution like any other production workload. Isolation, observability, and governance are the baseline.

Takeaway

Secure execution isn’t optional. It’s the foundation for trustworthy AI systems.

Start with cloud-native interpreters for early experiments. As you scale, move toward portable, auditable sandboxes. And from the beginning, make execution security part of your compliance and monitoring pipelines.

Because in the end, secure AI execution isn't just another feature; it's the bedrock for enterprise AI.

Want the full deep dive? Check out my complete article on Medium.

🚀 Stay tuned for more posts in AI Security & Development! Follow for more insights on securing AI, cloud, and Web3.
